Genevieve Bell, founding Director of the Australian National University’s School of Cybernetics, talks to Brunswick’s Alice Gibb.

Above: A dancer interacts with a flying drone in an Australasian Dance Collective performance, an example of a research and education collaboration from the Australian National University’s School of Cybernetics.

If you believe the destiny of the human species is to be servants or even victims of our AI overlords, Genevieve Bell would like a word. She is a Distinguished Professor at the Australian National University and the inaugural Director of the School of Cybernetics, the first new school to be opened at the university in at least 40 years.

The School of Cybernetics is intended to create a space to build new ideas on the relationships between humans, technology and ecology as systems and to develop a vocabulary through which to analyze and adapt those systems. Bell is also a Senior Fellow at Intel, where for 25 years she has been the chipmaker’s resident anthropologist. She holds a PhD from Stanford in cultural anthropology.

“You might reasonably ask, ‘How does a person with that set of skills end up at Intel?’” she says. “And I have a good Australian answer: I met a man in a bar in Palo Alto, and he asked me what I did. I said I was an anthropologist. And he said, ‘What’s that?’ I said, ‘I study people.’ And he said, ‘Why?’ I probably should have guessed he was an engineer at that point.”

From that conversation, she wound up at Intel. “I quit the university system that I understood, and I moved to a town I’d never lived in, to a company whose business model I understood even less well than the technology they were making, to a place that was a cube farm. And it was the single best decision I’ve ever made in my life, and I never regret it.”

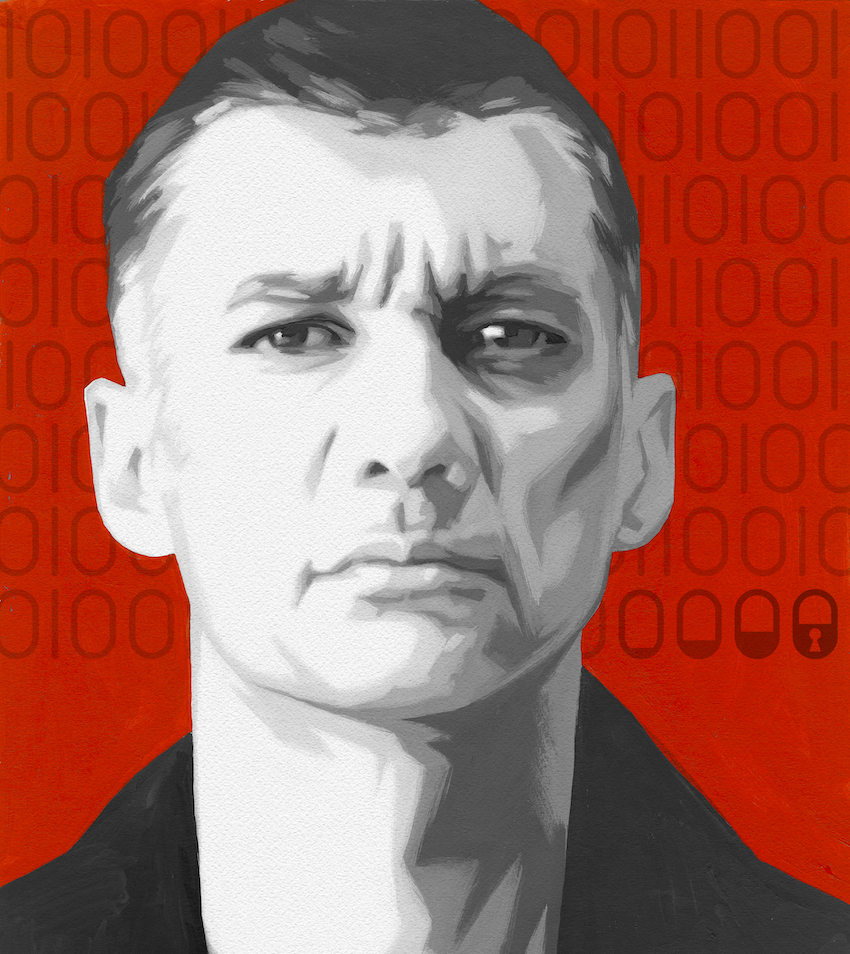

Genevieve Bell

Bell is considered an important global voice in the debate around artificial intelligence and its role in human society. She now also serves on the Australian Prime Minister’s Science and Tech Council, a small advisory group across the sciences, and is a non-executive director on the board of the Commonwealth Bank of Australia, Australia’s largest bank.

The word “cybernetics” was coined by mathematician Norbert Wiener in the aftermath of World War II, when researchers from mathematics and many other disciplines were looking at the implications of the West’s growing technological prowess. Among them were Margaret Mead, the famed anthropologist, and John von Neumann, the mathematician who worked on the ENIAC project, one of the first programmable electronic computers. They sought ways to describe and improve the dynamic relationships involving technology.

“For me cybernetics is theory and practice,” Bell says. “It’s both the theory of and the approach to building complex systems that have humans, technology and the ecology in a constant dynamic relationship.”

The field’s influence on the internet has left us with the prefix “cyber-” to refer to pretty much any digital network activity. But that influence also spreads into many other fields, including social dynamics, climate studies and creative projects as far-flung as rock star David Bowie’s famed Berlin trilogy.

The systems focus and collaborative, multidisciplinary approach of cybernetics’ founders are reflected in Bell’s drive to turn her new school into an arena for diverse ideas.

“One of the joys of being an anthropologist in a company of engineers is being on teams that never looked or sounded like me,” she says. “You learn to find comfort and indeed celebrate leading teams that you can’t use shorthand with. Because you don’t have shared experiences or reference points.”

What does cybernetics have to offer to the 21st century?

All of our big problems are really systems problems. Whether it’s vaccine rollouts, masks, policies, or ships stuck in the Suez Canal shutting down parts of global trade, distributions of everything from toilet paper to pasta—all of those felt like systems problems. Systems only become really visible when they’re not working. There’s a whole series of systems that are hugely important in most of our lives, and we don’t have the vocabulary for talking about them.

Cybernetics provides a vocabulary for thinking about complex dynamic systems that are always about the relationship between the human, the ecological and the technical. So it’s never just the people or the technology, but it’s about the interplay of all of that stuff. As leaders, we’re not necessarily trained to see things as a system or think about, “If this is a system, where are its edges and where are my points of intervention, leverage and control?”

How do you see the work in the 1940s serving as a model for leaders now?

For me there are three big ways. First, in the 1940s, they understood the notion of iterative-ness. You’re going to prototype or iterate your way to the answer.

In the 21st century, that process is contrary to what we’re told—move faster, work smarter, get stuff done. One of the lessons for me from this particular crew was, speed is fine, but you actually have to come back to conversations over and over again. The first answer isn’t the best answer, and there’s something about the ritual of conversations that unfolded.

Second is this notion of a plurality of voices in the room, what that meant. People were going to argue, and arguing was a feature, not a bug. You wanted to create space for productive discomfort, where you were going to have to be sitting with ideas that didn’t sound like yours from people who didn’t sound like you and often didn’t look like you—multiple contested ideas in the room, over and over. Being uncomfortable can be a productive space out of which new ideas might be generated—not always, but they can be.

The third point for me is a notion of grace. You wanted to make an idea that was strong enough that it would hold its form to get from your hands to someone else’s, but not so rigid that it discounted someone else being able to do something else with it. They hoped they were building ideas that would last 100 years. They hoped they were building rules that people would go and do something with—strong enough to hold their own, but not so much that it resisted being changed.

The engineering version of that is “strong opinions lightly held.” You need to hold ideas with grace and the ideas themselves must be graceful enough to survive challenge and adaptation.

Line Study

Distinguished Professor Genevieve Bell was chosen to deliver the inaugural Ann Moyal Lecture of the National Library of Australia, a series showcasing contemporary questions in various fields of knowledge. Bell’s talk focused on the Overland Telegraph Line, built in the 19th century to connect Australia internally and, via undersea cable, to link the continent with the rest of the world. Dismantled in the 1970s, the Line represents a discrete system that engaged vast global networks of supplies, cultures, disruptions and transformations: a case study in the interrelationships of technology, people and ecology. Bell zeroes in on one site, the “repeater” station at Strangways Springs, which became a colonial town full of different cultures, one that involved and disrupted the ancient community of Aboriginal people. Her talk is replete with the names and personalities of people, families, animals and places.

“By grounding this study of the Overland Telegraph Line in particulars,” she said, “I hope to remind myself that any large system, any cybernetic system, will unfold somewhere, with someone, and that those somewheres and someones matter a great deal.”

With this small patch of a large tapestry, Bell reminds us that no robust system is ever two-dimensional, but extends out in all directions, intersecting other systems with their own networks. The story offers lessons that apply equally well to the internet and the metaverse.

“Part of the reason for wanting to unfold a story like this is that it’s pre-digital, but it’s all the same pieces.”

Have we lost some of that, both the collaborative spirit and the broader view on critical technology?

Yes, it is startling to me, and I have been thinking about it a lot recently—the move from cybernetics to artificial intelligence in particular. From talking about complex dynamic systems that are about the relationships between the human and the technical, we went to, “We’re just going to make the machine simulate the brain.” I feel like something got lost in that moment.

We’re in the very early days of AI, so it’s hard to judge. But we’ve started from the notion that we can break things down into small enough pieces that the machine can do all the work, and you don’t ever have to think about the corpus or the whole. It leads you to be careless in some ways about how the pieces all fit back together again. It would be a bit like talking about food by giving people ingredient lists and never talking about the finished dish.

Because it isn’t just about the pieces, it’s about the whole, and about the whole’s relationship to other things. That feels to me like a very hard conversation we need to have and a deeply urgent one. On the other hand, we’re open to more voices in the conversation than they were then.

Are you worried about AI and generative AI tools like ChatGPT?

I was in a room full of very smart people the other day, who were basically trying to explain to me that the robots were going to kill us all, because it was inevitable.

Let’s just pause for a minute. We all grew up steeped in science fiction stories, which have a very particular vocabulary: The computational object understands us, but is going to kill us. Suddenly we have this technology that feels uncanny and familiar because we’ve grown up with those stories.

But that doesn’t mean the story that we grew up with is true. Those stories were one of the ways we actually played out our anxieties. We’re not doing ourselves a particularly good service imagining those stories are true.

We need to remember they were stories we were telling ourselves about what might happen, not what was going to happen.

Are you optimistic or pessimistic for the future?

I always think I’m not either of those things. I’m just committed to building a future I want to live in, a future that is going to be, well, better than the present in which we find ourselves.

Alan Kay, who was at Xerox, used to say, “The only way to predict the future is to build it.” If you want an optimistic future, just build it. The future is absolutely makeable, and we make it every day. So there’s something about having some agency in those conversations that feels really important.

At the Helm

“Cybernetics” comes from the Greek word kybernētēs, meaning steersman or pilot, an image that reflects the founding group’s concern for environmental feedback informing the construction, control, responsiveness and responsibility of emerging technology. For Norbert Wiener, who coined the word, humans stand at the helm of their new technology and must be able to guide it in the real world, taking into account environmental factors.

Beer to Bowie

Producer Brian Eno was David Bowie’s principal collaborator on the groundbreaking albums, Low, Heroes and Lodger, all recorded in Berlin in the late ’70s. Eno applies cybernetics principles in the recording studio, inspired in part by the work of British organizational psychologist Stafford Beer, who said, “Instead of trying to specify [a goal] in full detail, you specify it only somewhat. You then ride on the dynamics of the system in the direction you want to go.”

How does all this manifest in your work at the School of Cybernetics?

We’re really interested in collaborations, for one thing. We’ve worked on how to partner with various organizations in really different ways—with the National Library, the National Gallery, Meta and Google, for example.

We’re collaborating with the Australasian Dance Collective in a series of performances involving people and drones dancing together. Professor Alex Zafiroglu is the lead researcher on that. It’s a completely different way of thinking about what drones can do, not drones as fantastical spectacles but drones and the human body. So, how would we think about this, not just human-computer interactions, but rapport and empathy between computational objects and people? The dancing does a delightful number on your head. That’s been fabulous to watch.

There has been a lot of talk of late about how you would detox AI. One of the real virtues of cybernetics is that it insists that you have humans in the conversation; it is about the relationships between humans and computing, not humans as a thing you’re engineering out of the system or something you put in at the end.

Certainly, the way we’ve enacted cybernetics here is rebooted for the 21st century. We’ve been really determined about what it should look like here in Australia. Where I am currently is on the lands of the Ngunnawal and Ngambri peoples here in Canberra, Australia. These lands have been occupied and lived on for more than 20,000 years. The largest technical systems near me were built 20,000 years ago and were probably used last weekend—a very large set of fisheries that were built a very long time ago.

So we’re always really acutely aware that we are talking about building the future in a place where people have been building the future and imagining systems for 60,000 years. All of our work proceeds from the fact that we’re in a place where that’s both our legacy and our responsibility. We graduated the first Aboriginal person with a master’s degree in the college’s 50-year history. I promised her on the day she graduated that she would not be the last—and she won’t be.

As a very small child, my mother sat me down and explained to me, “You have a moral responsibility to make the world a better place. And it has to be better not just for yourself but for everyone else.” That usually means, better for the people who couldn’t find their ways into the rooms where those decisions were being made. You were responsible for making sure that the world was more fair and more just.

That’s what we’re working toward.

More from this issue

People Puzzle

Most read from this issue

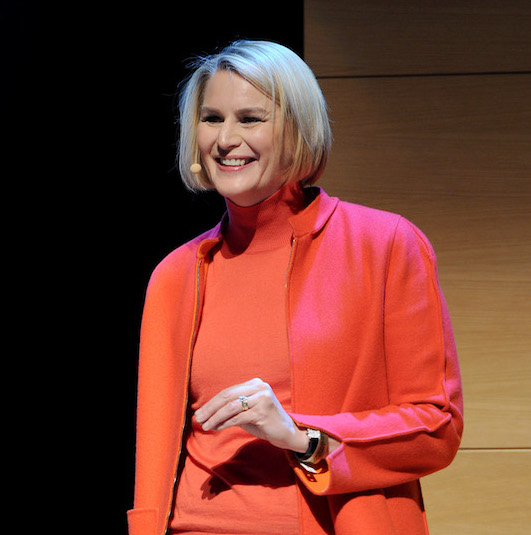

Katy George